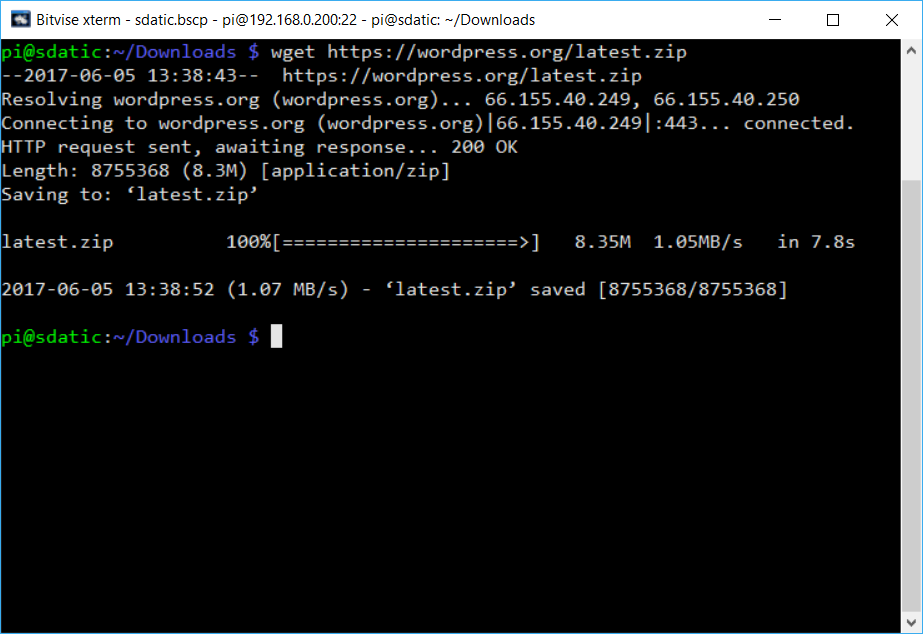

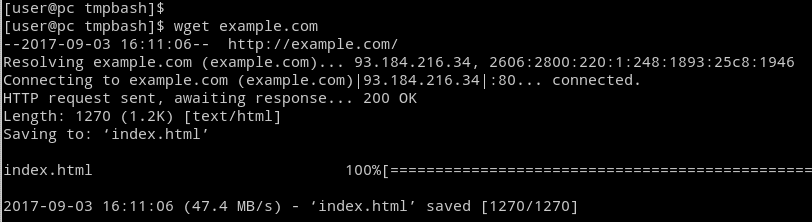

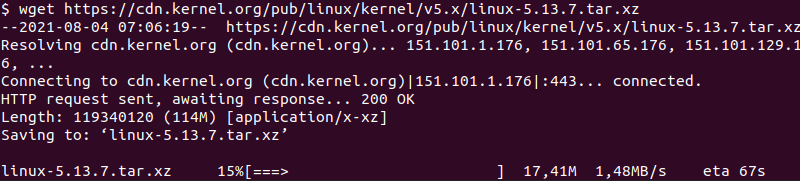

The wget command is used on Linux to download files from the web. It is a free tool that supports the HTTP, HTTPS, and FTP protocols, as well as HTTP proxies, for downloading any file.

On Windows, add wget to your PATH first. Open the Start menu, search for "variables", and open the link for "Edit the system environment variables". Add a new user variable (I called it WGETHOME, with the directory C:\Tools\wget as its value). Finally, select the PATH variable, append %WGETHOME% to the list after a ';' separator, and OK your way back.

A common question goes like this: "I have a list of wget commands as shown below:

wget -q -nH --no-check-certificate --cut-dirs=5 -r -l0 -c -N -np -R 'index*' -erobots=off --retr-symlinks

How do I download them? And how can I see the download progress in a log file as well?"

There is a very simple solution and a simple solution. As berndbausch said, you could just run bash filename.txt, since shell scripts are basically text files with lists of commands, just like your text file. Or you could do something more clever, though maybe not that useful for this use case: if the commands differ only in their URLs, you can write the URLs in your text file and then use that text file as input for wget:

wget -q -nH --no-check-certificate --cut-dirs=5 -r -l0 -c -N -np -R 'index*' -erobots=off --retr-symlinks -i textfile

Wget can do more than control the download process, as you can also create logs for future reference. You can also consider the following flags as a partial way to control the output you receive when using wget:

- wget -o path/to/log.txt writes the log to the specified file instead of printing it to the terminal.
- wget -q turns off all of wget's output, including error messages.
- wget -v explicitly enables wget's default of verbose output.
- wget -nv (--no-verbose) turns off most log messages but still displays error messages and basic information.

Note that the command above uses -q, which silences everything, so to record download progress in a log file you would drop -q and add -o.
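To tie the URL-list approach and the logging flags together, here is a minimal sketch. The file names urls.txt and download.log and the example.com URLs are hypothetical placeholders, not values from the question above. By default the script only prints the wget command it would run, so nothing is downloaded until you opt in.

```shell
#!/bin/sh
# Sketch: download every URL listed in a text file and keep a log.
# urls.txt, download.log and the example.com URLs are placeholders (assumptions).
set -eu

url_file="urls.txt"
log_file="download.log"

# One URL per line; replace these with the real targets.
printf '%s\n' \
  "https://example.com/data/a.txt" \
  "https://example.com/data/b.txt" > "$url_file"

# -nv keeps the log short but still records errors,
# -c  resumes partially downloaded files,
# -o  writes the log to a file instead of the terminal,
# -i  reads the target URLs from the file.
if [ "${RUN:-0}" = "1" ]; then
  wget -nv -c -o "$log_file" -i "$url_file"
else
  # Dry run (the default): show the command without touching the network.
  echo "wget -nv -c -o $log_file -i $url_file"
fi
```

Run it as is to check the command, then run it again with RUN=1 set in the environment to actually download. Swapping -nv for -v would record the full progress output in the log.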
The free, cross-platform command-line utility called wget can download an entire website. The article will guide you through the whole process. The following flags control the download:

- wget -t 10 will try to download the resource up to 10 times before failing.
- wget -c / wget --continue will continue downloads of partially downloaded files.
- wget -nc / wget --no-clobber will not overwrite files that already exist in the destination.
- wget -i file reads the target URLs from an input file. A plain text file with one URL per line works as is; if the input file is an HTML page, add the --force-html flag so that wget parses the links out of it.
- wget -R index.html / wget --reject index.html will skip any files matching the specified file name. The asterisk (*) is a wildcard: -R 'index*' excludes all the index files, and "*.png" would skip all files with the PNG extension.
- wget --cut-dirs=# skips the specified number of directory levels from the URL path when creating local directories. For example, -nH --cut-dirs=1 would change the specified path of "/pub/xemacs/" into simply "/xemacs/" and reduce the number of empty parent directories in the local download.

- wget -nH removes the "hostname" directories. In other words, it skips over the primary domain name. For example, wget would skip the folder named after the host in the previous example and start with the History directory instead.
- wget -X /absolute/path/to/directory will exclude a specific directory on the remote server.
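The effect of -nH combined with --cut-dirs is easier to see with a small emulation. The sketch below does not call wget at all. The local_dir function is a hypothetical helper that mimics how the local directory is derived from the URL path once the hostname directory has been dropped.

```shell
#!/bin/sh
# Emulation only: prints the directory that "wget -nH --cut-dirs=N" would
# create for a given URL path. local_dir is an illustrative helper, not part of wget.
local_dir() {
  path=${1#/}   # drop the leading slash of the URL path
  cut=$2        # number of leading directory levels to skip
  while [ "$cut" -gt 0 ] && [ -n "$path" ]; do
    case $path in
      */*) path=${path#*/} ;;  # strip one leading directory component
      *)   path="" ;;          # nothing left to strip
    esac
    cut=$((cut - 1))
  done
  printf '%s\n' "$path"
}

local_dir "/pub/xemacs/" 0   # prints pub/xemacs/
local_dir "/pub/xemacs/" 1   # prints xemacs/
local_dir "/pub/xemacs/" 2   # prints an empty line: files land in the current directory
```

Each extra level passed to --cut-dirs removes one more leading directory, which is why a large value such as --cut-dirs=5 flattens a deeply nested remote tree.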

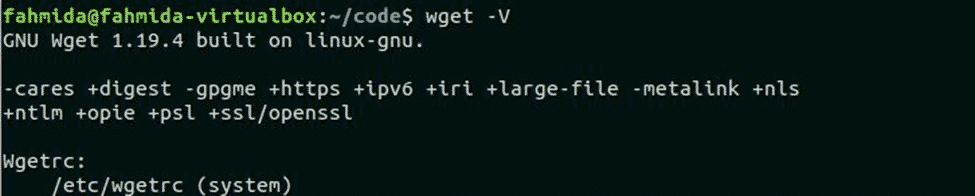

GNU Wget is a free command-line utility for downloading files from the Web. It is non-interactive, which means it needs no user login and can run in the background. It supports standard web protocols like HTTP, HTTPS, and FTP, as well as retrieval through HTTP proxies. Besides shipping with many Unix-based operating systems, the wget command also has a version built for Windows. At the time of writing, the latest Wget Windows version is 1.21.6.

wget -e robots=off ignores restrictions in the robots.txt file. In general, it's a good idea to disable robots.txt to prevent abridged downloads.

You'll find that wget is a flexible tool, as it accepts a number of additional flags. This is great if you have specific requirements for your download. Let's take a look at two areas: controlling the download process and creating logs. There are many flags to help you set up the download process.
